In a previous blog post we discussed why explainable AI is so important and how different AI models fall short of helping humans understand the basis for their decisions. How can our modern-day AI marvels be so good at making predictions but so bad at helping us understand the basis for those predictions? Nowhere is this problem more pronounced than with the most complex AI model in existence today: the artificial neural network (ANN).

First, it is important to understand that an explanation for the output of any system can only be expressed in terms of the input variables to the system. You can have many inputs to a system, and some may have a greater impact on the output than others, but the explanation of the output must be expressed in terms of these inputs. Therefore, to understand the logical explanation behind any model, inputs must be preserved as they make their way through the system.

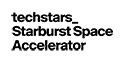

Neural Networks: Making a Smoothie with Data

A primary reason that neural networks are hard to explain is that they are destructive to input information. Let’s take a look at what is happening in a little more detail.

The image above shows the processing that goes on within each node of a neural network.

- First, each node sums all of the inputs it receives.

- Second, the node applies an activation function to the calculated sum. This function essentially changes the output into some other value depending on the magnitude of the sum.

- Finally, weights, which are learned during training, determine how much of this node’s value is passed to subsequent downstream nodes.

The end result is a model that may perform extremely well, but that thoroughly scrambles up the inputs, like a sort of data blender. Input data is mixed in various proportions in a way that cannot be reversed. It is very difficult to quantify and assess the contribution of any single ingredient.
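The blender effect can be seen in a single node. The sketch below is a minimal illustration, not any particular library's implementation: it assumes the common formulation in which weights are applied to a node's incoming values, and the function name `node_output`, the sigmoid activation, and the weight values are all hypothetical choices made for the example.

```python
import math

def node_output(inputs, weights, bias=0.0):
    """One node: weighted sum of the inputs, then a sigmoid activation."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1.0 / (1.0 + math.exp(-total))

# Hypothetical weights, chosen only for illustration.
weights = [0.5, 0.5]

# Three different input vectors that all produce the same weighted sum (2.0).
a = node_output([1.0, 3.0], weights)
b = node_output([3.0, 1.0], weights)
c = node_output([2.0, 2.0], weights)

# All three values are identical, so the node's output alone
# cannot tell us which inputs produced it.
print(a, b, c)
```

Because very different inputs collapse to the same value after only one node, the original ingredients cannot be recovered from the output, and each further layer compounds the effect.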

Additionally, ANNs are known for being deep, possessing many layers, each containing potentially hundreds of “blender”-like functions that are fed the incomprehensible results from the previous “blended” layer. By the time you reach the output layer, the original inputs are completely unintelligible.

Note: See this article for more on how neural networks work

The Output Layer with a Neural Network

The final stage of a neural network is the output layer, which is quite simple in operation. For every possible prediction there is a corresponding node in the output layer, and the output node with the highest value becomes the prediction of the system.

Think of the output layer as a series of lightbulbs. The brightest lightbulb becomes the prediction of the machine. However, due to the blender-like operations that were done to reach this step, individual components of the input data can no longer be recognized and cannot help us understand the basis for the predictions being made.
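The “brightest lightbulb” rule amounts to picking the index of the largest output value. The following is a minimal sketch of that rule only; the function name `predict`, the labels, and the output values are made up for illustration.

```python
def predict(output_values, labels):
    """Return the label whose output node has the highest value."""
    best_index = max(range(len(output_values)), key=lambda i: output_values[i])
    return labels[best_index]

# Hypothetical labels and output-node values, one value per label.
labels = ["football", "basketball", "tennis ball"]
outputs = [0.71, 0.18, 0.11]

prediction = predict(outputs, labels)
print(prediction)  # football
```

Note that the result carries only a label and, at most, a score. Nothing in it refers back to the input values that led to the decision.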

The Output Layer with Pattern-based ML: Preserving the data

At Natural Intelligence, we are focused on pattern-based AI for some very good reasons. One of the most important is that pattern-based AI is non-destructive to the input data. In other words, we preserve the data all the way from the input of the system to the output prediction. We not only get a prediction, but we also get a ‘recipe’ of input ingredients for that prediction. Within this recipe is the underlying basis for the prediction.

How we preserve this data integrity through our system is beyond the scope of this blog post, but rest assured we have taken great care to implement the same fundamental processing concepts exhibited by the human neocortex and how it responds to patterns. With this understanding, it should be no surprise that our system exhibits more human-like characteristics than mathematically based AI models.

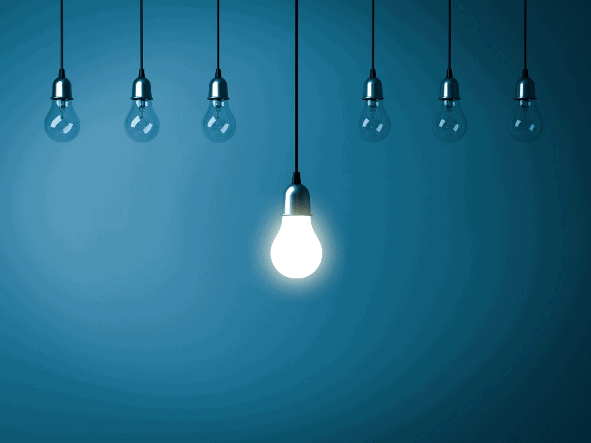

Output Layer Capacity

Let’s compare the output layer of a neural network with that of a pattern-based ML model. Much like a neural network, the output layer of a pattern-based AI system is a collection of nodes. However, this is where the similarity ends.

As discussed previously, the output node of a neural network is like a dimmable lightbulb, and the node with the highest (brightest) value becomes the winning node. The number of objects that a neural network can detect is limited to the number of nodes in the output layer. If your neural net has 20 nodes in the output layer, you have the capacity to learn up to 20 objects – no more.

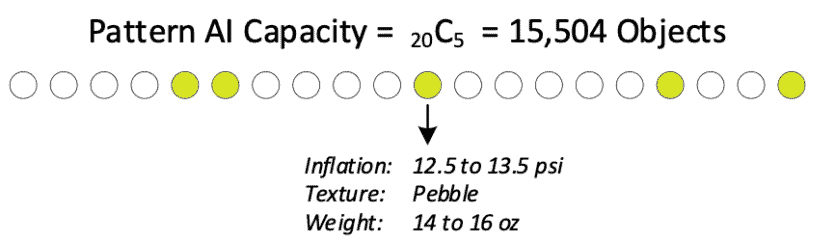

The output layer of a pattern-based ML model has a much higher capacity for detecting objects. Rather than each node representing a prediction, we look at the pattern of active nodes in the output layer. A pattern-based system with 20 output nodes, of which exactly 5 are active, can form 15,504 distinct patterns, which gives it the capacity to detect more than 15,000 objects. In combinatorics this is written as 20C5 *. Compare this to the minuscule capacity of a neural network with the same number of output nodes and you will begin to understand why pattern-based AI is so powerful.

This 20-node example is quite small. Our pattern-based AI systems are often configured with 100 output nodes or more. In these configurations the number of objects that can be learned and detected becomes astronomical. You can see that pattern-based AI has a much higher capacity to learn and detect objects.
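The capacity figures can be checked with a few lines of Python using the standard library's `math.comb`. This is a small sketch: the helper name `pattern_capacity` is made up here, and the 100-node case assumes 5 active nodes, which the post does not specify.

```python
import math

def pattern_capacity(nodes, active):
    """Number of distinct patterns with exactly `active` of `nodes` switched on."""
    return math.comb(nodes, active)

# One-node-per-class output layer: capacity equals the number of nodes.
one_per_node = 20

# Pattern-based output layer from the example: 20 nodes, 5 active.
patterns_20 = pattern_capacity(20, 5)

# Assumption for illustration: 100 nodes with 5 active.
patterns_100 = pattern_capacity(100, 5)

print(one_per_node, patterns_20, patterns_100)  # 20 15504 75287520
```

Going from 20 to 100 nodes multiplies the one-node-per-class capacity by five, while the number of 5-node patterns grows from about fifteen thousand to about seventy-five million.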

Increased learning capacity is critically important to delivering Explainable AI. Classifications in machine learning are often based on subtle differences between inputs. High learning capacity provides a correspondingly large number of ways to express those subtle differences between input classes.

Even better, the critical information describing these subtle differences is delivered directly to the data scientist. Gone are the days of trying to unwind the hidden layers of the neural net in an attempt to understand the subtle differences between classifications.

Higher Capacity Learning means Greater Understanding

While the higher capacity of a pattern-based AI system is a good start, we can go much further. In the image above we see five nodes in the output layer of the pattern-based system have been activated. This exact combination (pattern) of nodes corresponds to a specific object class that was input into the system.

For example, this pattern of active nodes might relate to a football in a system designed to classify sporting goods products. But this is just a pattern of nodes. Where is the explanation for why these nodes have turned on? On what basis did the pattern-based system come to a prediction that this pattern of nodes represents a football?

Here is where the magic begins and why it is so important to preserve the integrity of input data in an AI system. We can look inside each node and understand why it turned on. Because pattern-based AI eliminates the problem of ‘blending’ the input values, we can trace backwards from each output node to the very input data responsible for turning the node on.

Furthermore, each node can represent multiple input values in various combinations. Looking again at the output layer of our pattern-based system, we can see the input conditions that caused that node to turn on by backtracking this output node all the way back to the input values.

This single node is sensitive to input values related to inflation pressure, texture, and weight. Similarly, the other nodes would be related to other properties that are commonly associated with footballs, for example, size (dimensions), color, laces, and overall shape.
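To make the idea of tracing a node back to its inputs concrete, here is a toy sketch. It is not the Natural Intelligence implementation, which this post does not describe. It simply assumes that each output node keeps a record of the input conditions that switch it on. The node names, feature names, and numeric ranges are all invented for illustration.

```python
# Hypothetical record of the input conditions behind each output node.
# A tuple is a numeric range (low, high); a set lists allowed categories.
NODE_CONDITIONS = {
    "node_a": {"inflation_psi": (12.5, 13.5), "texture": {"pebbled"}, "weight_oz": (14.0, 15.0)},
    "node_b": {"has_laces": {True}, "shape": {"prolate"}},
    "node_c": {"shape": {"sphere"}},
}

def matches(value, condition):
    """Check one input value against a range or a set of allowed categories."""
    if isinstance(condition, tuple):
        low, high = condition
        return low <= value <= high
    return value in condition

def active_nodes(sample, node_conditions):
    """Nodes whose conditions are all satisfied by the sample."""
    return [
        name
        for name, conditions in node_conditions.items()
        if all(matches(sample[feature], cond) for feature, cond in conditions.items())
    ]

def explain(sample, node_conditions):
    """For each active node, list the input values responsible for it."""
    return {
        name: {feature: sample[feature] for feature in node_conditions[name]}
        for name in active_nodes(sample, node_conditions)
    }

# Invented input values for a single product.
sample = {
    "inflation_psi": 13.0,
    "texture": "pebbled",
    "weight_oz": 14.5,
    "has_laces": True,
    "shape": "prolate",
}

explanation = explain(sample, NODE_CONDITIONS)
print(explanation)
```

Because the conditions are stored alongside each node rather than blended away, the pattern of active nodes comes with a readable list of the inputs behind it.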

Conclusion

The Natural Intelligence pattern-based AI has more capacity and provides greater insights than neural network models. This is a powerful combination that can be used in many different ways to help data scientists understand their AI models better and to increase the confidence in AI systems overall.

Compared with the way neural networks destroy input data and rely on the simplistic brightest-bulb concept, the power and value of pattern-based AI are clear to see.

* Capacity of system (xCy) = x! / ((x-y)! y!), where x = number of nodes and y = number of active nodes
